The provision of data containing personal health information is still regarded with considerable scepticism by European citizens. The growing interest of large companies in medical and patient data conflicts with citizens’ reservations about the privacy, anonymization and pseudonymization of data so intimately bound to their bodies. In recent weeks, however, the intense debate around a possible contact tracing app for COVID-19 has again shown that people are willing to cooperate and contribute even sensitive personal data if doing so supports the common welfare. Furthermore, the debate has revealed that there need not be a trade-off between privacy and public welfare, provided that certain conditions are met in the development of an app like Pepp-PT (Pan European Privacy Protecting Proximity Tracing). First: encryption methods that enable compliance with the GDPR, the impossibility of de-anonymizing the collected data, and the renunciation of central servers (i.e. servers under state control). Second: transparency about the app’s development process, which enables non-governmental organizations like the German Chaos Computer Club (CCC) or Reporters without Borders to inspect, test and evaluate the app’s source code published on GitHub. Both points create the trust needed to involve a large part of Europe’s population. Third: the involvement of citizens at several points in the data collection and exchange process. Users not only need to download and activate the app, but also have to agree that their smartphone’s Bluetooth is activated and can be used by the app.
If they are diagnosed as Corona-positive, they have to grant the app the right to use this information; only then do all other app users who have been in contact with the infected person receive a notification of the diagnosis. On this basis, they can decide whether or not to contact a medical authority for further testing. The third point essentially means that users are asked to act as responsible citizens who take on responsibility, and who are empowered by their involvement in the decisions within the process.
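
The consent-driven flow described above can be illustrated with a simplified sketch of decentralized proximity tracing in Python. It is not the Pepp-PT protocol: it assumes a scheme loosely modeled on decentralized designs such as DP-3T, in which each phone derives rotating Bluetooth identifiers from a secret daily key, publishes that key only after a positive diagnosis and with the user’s explicit consent, and checks for exposure locally. The names `Phone`, `ephemeral_ids` and `EPOCHS_PER_DAY`, as well as the 15-minute rotation interval, are illustrative assumptions.

```python
import hashlib
import hmac
import secrets
from typing import List, Optional, Set

# Assumption: one ephemeral Bluetooth identifier per 15-minute slot.
EPOCHS_PER_DAY = 96


def ephemeral_ids(day_key: bytes) -> List[bytes]:
    """Derive the rotating identifiers a phone broadcasts during one day."""
    return [
        hmac.new(day_key, epoch.to_bytes(2, "big"), hashlib.sha256).digest()[:16]
        for epoch in range(EPOCHS_PER_DAY)
    ]


class Phone:
    """Hypothetical app instance; all state stays on the device."""

    def __init__(self) -> None:
        self.day_key = secrets.token_bytes(32)  # secret, never broadcast directly
        self.heard: Set[bytes] = set()  # identifiers received from nearby phones

    def broadcast(self) -> List[bytes]:
        """Identifiers sent over Bluetooth once the user has enabled it."""
        return ephemeral_ids(self.day_key)

    def observe(self, eph_id: bytes) -> None:
        """Record an identifier received from a phone in proximity."""
        self.heard.add(eph_id)

    def share_diagnosis(self, consent: bool) -> Optional[bytes]:
        """After a positive test, release the day key only with explicit consent."""
        return self.day_key if consent else None

    def check_exposure(self, published_keys: List[bytes]) -> bool:
        """Match locally: recompute each published key's identifiers and compare."""
        return any(self.heard.intersection(ephemeral_ids(key)) for key in published_keys)


alice, bob, carol = Phone(), Phone(), Phone()
bob.observe(alice.broadcast()[10])  # Alice and Bob were close during slot 10
published = [k for k in (alice.share_diagnosis(consent=True),) if k is not None]
print(bob.check_exposure(published))    # True: Bob is notified
print(carol.check_exposure(published))  # False: Carol never met Alice
```

In this sketch, matching happens on each phone, so the only data ever distributed are the daily keys of users who consented to share their diagnosis; how those keys reach other phones is deliberately left open.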

This latter point, the empowerment of users as data donors, is most often neglected by policy makers as well as in legislation. Data protection laws clearly identify data controllers, but ownership is ill-defined. This is because data can be copied without loss, so customary conceptions of ownership (as in the case of a bicycle) do not apply. Furthermore, in a world where data are often a by-product of human activity, massive data collection by a few monopolists has blurred the sense of data ownership, for example among users of online media. This has created a feeling of being exploited by such data aggregators, accompanied by a general mistrust of data collection. This is also why the case of the Pepp-PT app seems to open new doors for users: they do not only donate personal data, they also get something back from the app. What they get back is no more than a tiny piece of information, but one they regard as precious: whether or not they have been in the company of an infected person.

In the scenario described above, only data are involved; with many people using the app, this can even be Big Data. AI comes in when more data become available: data about the health of app users provided by healthcare services, or fitness data collected by apps and devices from the self-quantification domain. If these data could be aggregated together with the data collected by the Pepp-PT app, enough information would be available for machine learning to answer questions such as: How do personal fitness and the course of the disease relate to each other? Which factors support a quicker recovery? Which variables predict complications during the course of the disease? This is where the full power of Big Data and Artificial Intelligence would unfold for the public good.

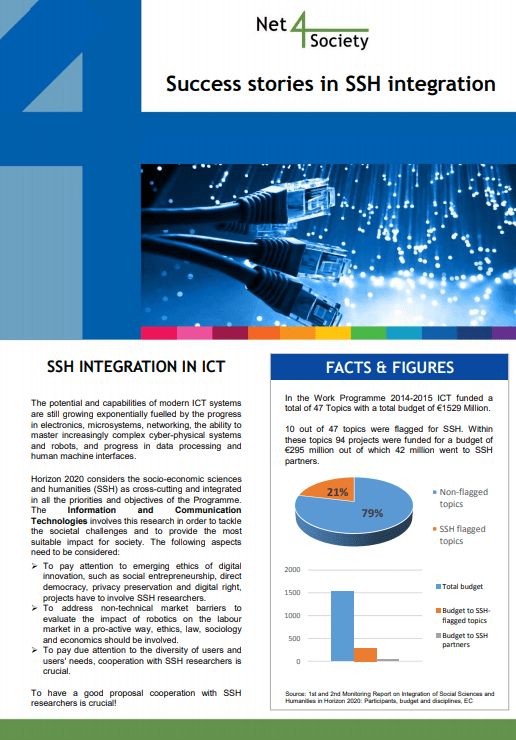

The successful use of AI requires computational power, big data, and the work of capable algorithm developers. All three ingredients can usually be found in big tech companies, but rarely beyond them. This reveals why a broader societal debate on “AI for the Public Good” has not yet taken place: we are far from having the necessary infrastructure and financial means in place. But what could this look like? And how could algorithmic innovation for the common welfare be fostered?

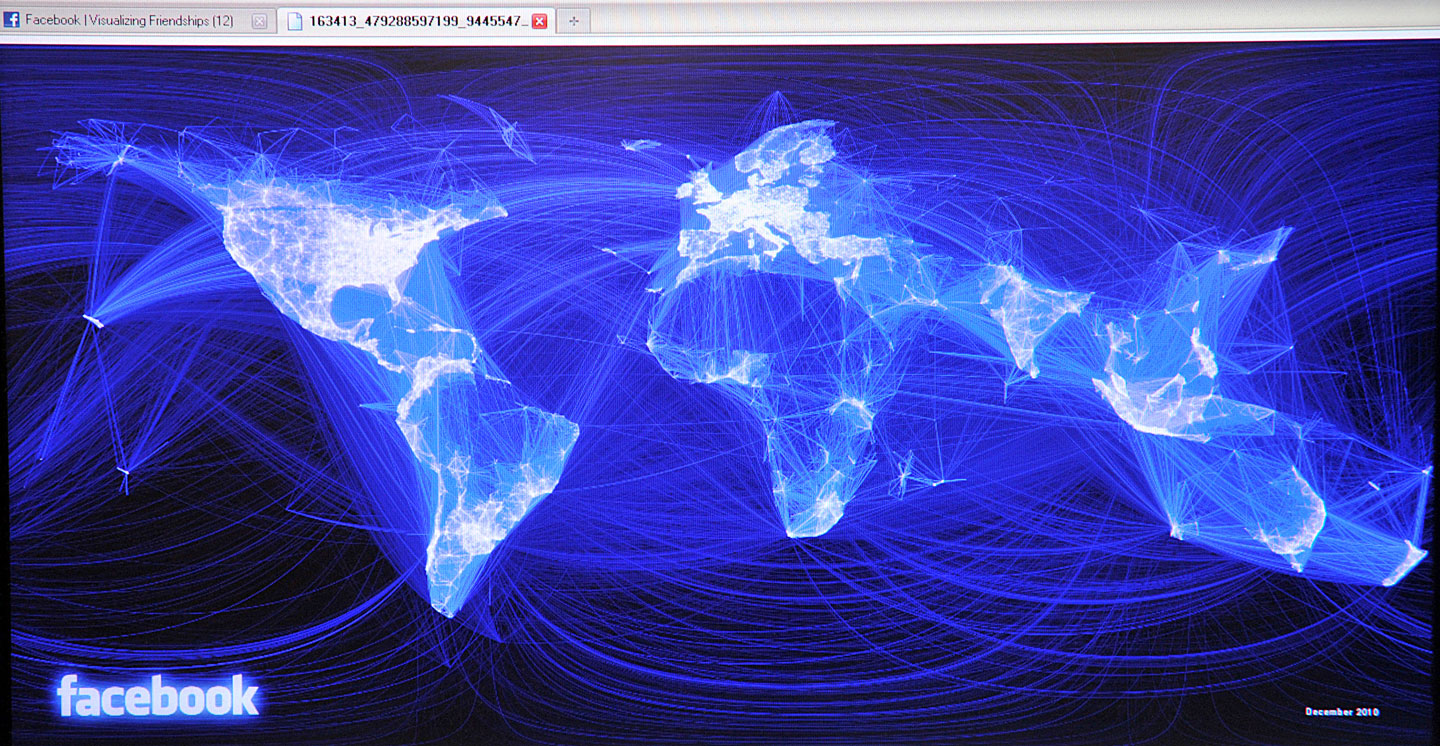

Smart Citizen. Image © Jörg Lehmann 2019

Beyond the case of a COVID tracing app, the idea of “Smart Cities” provides a scenario in which some of the prerequisites for using Big Data and AI for the public welfare become visible. Smart Cities need data on many important topics: energy, mobility, climate, environment, garbage, and so forth. Within the concept of Smart Cities, every urban dweller can easily imagine the need for solutions that go beyond the individual household and her or his contribution to it. When is the best time to turn on the washing machine because power is available in abundance at that moment? Where can I find a parking space? Which alternative means of transport are available to bring goods from A to B? If rainfall becomes rarer but heavier, what is the best way for a house to manage flooding and mitigate disasters? Should polders and cisterns be installed to counterbalance periods of drought? How can systems for the reuse of recyclable materials and goods replace or complement the current garbage collection service?

Questions like these are addressed in the concept of Smart Cities, and it immediately becomes obvious that a lot of data are already available (energy, mobility, climate), while other data are missing (data on individual energy consumption, mobility, or patterns of daily resource use). Furthermore, facilities providing the necessary computational power, as well as relevant algorithms, are nowhere to be seen. Ouch. These seem to be the pain points where we as a society have to move forward if we do not want to relinquish algorithmic innovation to private companies. Data do not seem to be the problem; all of us produce them ceaselessly. They could be collected, aggregated and managed within data cooperatives (the German language has the word “Genossenschaft” for it). An example from health research is the Swiss cooperative MIDATA, jointly created in 2015 by ETH Zurich and the Bern University of Applied Sciences. In such data cooperatives, the personal data of the cooperative’s members are aggregated according to transparent governance principles and protected by state-of-the-art encryption to ensure privacy. Furthermore, citizens (and the communities forming a city) are empowered to steer data use according to their motivations and preferences. These cooperatives can provide access to linked data that did not exist in this form before, since they consolidate data previously stored in disparate silos.
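
How a cooperative might link data from separate silos while respecting its members’ choices can be sketched in a few lines of Python. This is not MIDATA’s implementation. It assumes that a member’s records in a fitness app and in health records share one member ID, that each member declares the purposes for which their data may be used, and that the cooperative replaces IDs with keyed-hash pseudonyms (a pseudonymization step, not encryption) before releasing only group-level statistics. All names, the secret, the 8,000-step threshold and the toy values are hypothetical and do not represent real data.

```python
import hashlib
import hmac
from collections import defaultdict
from statistics import mean
from typing import Dict, List

# Hypothetical key held under the cooperative's governance, never given to data users.
COOP_SECRET = b"cooperative-managed-secret"

# Hypothetical silos, each keyed by the same member ID (toy values, not real data).
FITNESS_APP = {"anna": {"steps": 9000}, "ben": {"steps": 4000},
               "cem": {"steps": 12000}, "dora": {"steps": 10000}}
HEALTH_RECORDS = {"anna": {"recovery_days": 8}, "ben": {"recovery_days": 15},
                  "cem": {"recovery_days": 6}, "dora": {"recovery_days": 6}}
# Purposes each member has agreed to; Cem has opted out of everything.
CONSENT = {"anna": {"covid_research"}, "ben": {"covid_research"},
           "cem": set(), "dora": {"covid_research"}}


def pseudonym(member_id: str) -> str:
    """Replace a member ID with a keyed hash so linked records carry no names."""
    return hmac.new(COOP_SECRET, member_id.encode(), hashlib.sha256).hexdigest()[:12]


def linked_dataset(purpose: str) -> Dict[str, dict]:
    """Join both silos, keeping only members who consented to this purpose."""
    linked = {}
    for member in FITNESS_APP.keys() & HEALTH_RECORDS.keys():
        if purpose in CONSENT.get(member, set()):
            linked[pseudonym(member)] = {**FITNESS_APP[member], **HEALTH_RECORDS[member]}
    return linked


def recovery_by_activity(data: Dict[str, dict], threshold: int = 8000) -> Dict[str, float]:
    """Release only group means of recovery days, never individual records."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for record in data.values():
        group = "active" if record["steps"] >= threshold else "less_active"
        groups[group].append(record["recovery_days"])
    return {group: mean(days) for group, days in groups.items()}


research_data = linked_dataset("covid_research")
print(len(research_data))  # 3: Cem's records are excluded
print(recovery_by_activity(research_data))
```

With these toy values, the released summary is a mean of 7 recovery days for the active group and 15 for the less active group. A real cooperative would add safeguards this sketch omits, such as encryption at rest and in transit and the suppression of groups too small to remain anonymous (here, the less active group contains a single member).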

While the issue of missing individual data can be solved by data cooperatives, the infrastructural questions remain. What is needed is computational power as well as platforms or interfaces for data analysis and interpretation that enable individual or collective users to derive insights from the available Big Data. Such insights form the basis for decisions, for example through predictions of transport and energy use in the coming months and years, of the watering needs of plants in public streets and private gardens, of the usage of parks, and of which communities or facilities need recyclable material. Industry can contribute to implementing Smart Cities by providing applications and interfaces on a pro bono basis; unions and associations like Data Science for Social Good Berlin might also help with data analysis. But such endeavours do not provide sustainable solutions for the lack of infrastructure. Policy makers should therefore promote the Smart City model by funding distributed data infrastructures that pilot new data aggregation models, and private foundations should provide the necessary investment in high-performance computers and expensive algorithm developers until a proof of concept has convinced governments or the European Commission to provide long-term funding for such independent institutions. Only if all these conditions are met can civil society move forward and find ways to use AI for the public good.