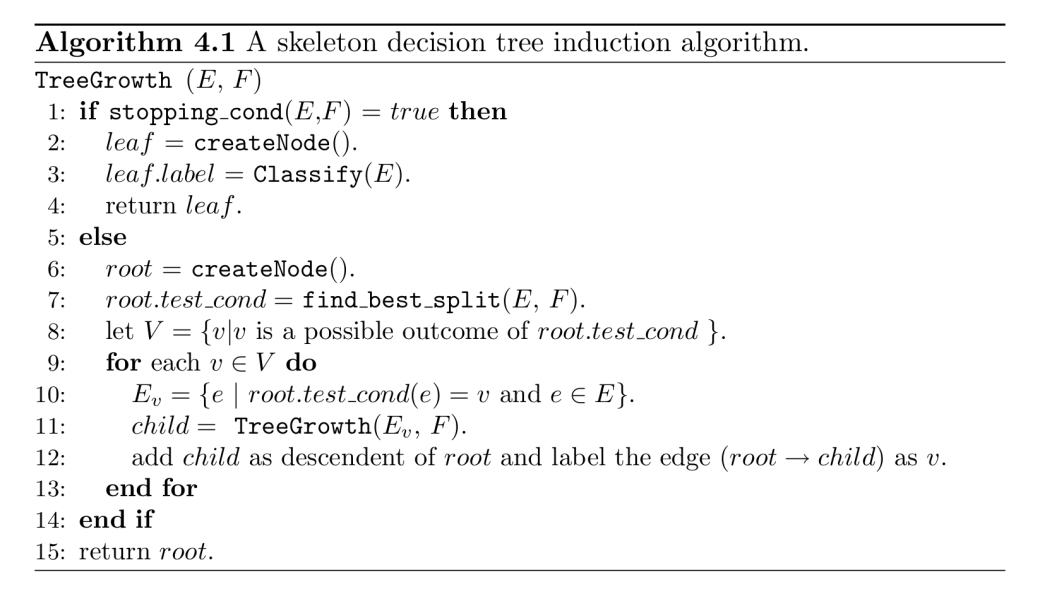

One of the amenities of Big Data (and of the neural nets used to mine it) is its potential to reveal patterns of which we were not aware before. “Identity” in Big Data might be a variable contained in the dataset. This implies classification, and a feature which cannot easily be determined (like some cis-, trans-, inter-, and alia-identities) might have been put into the ‘garbage’ category of the “other”. Alternatively, identity might arise from a combination of features that was unknown beforehand. This was the case in a study which claimed that neural networks are able to detect sexual orientation from facial images. The claim did not go unanswered; a recent examination of this study by Google researchers showed that differences in culture, rather than facial structures, were the features responsible for the result. Features that can easily be changed – like makeup, eyeshadow, facial hair, or glasses – had therefore been underestimated by the authors of the study.

The debate between these data analysts exposes insights well known to humanists and social scientists. Identities differ with context; depending on the situation she or he is in, a person may say “I am a mother of three children”, “I am a vegan”, or “I am a Muslim”. In fields marked by strong tensions and polarizations, identity statements can come close to confessions, and it might be wise to deliberate carefully about which answer it is opportune to give: “I am British” or “I am a European”.

It is not without irony that it is easy to list several identities that have been important throughout the past few centuries. Clan, tribe, nation, heritage, sect, kinship, blood, race – this is the typical stuff ethnographers and historians work on. Beyond the ones just named, identities like family, religion, culture, and gender are currently intensely debated in our postmodern, globalised world. Think of the discussions about cultural identities in a world characterized by migration; and think of gender identity as an example of an identity which has only recently split into new forms and created a new vocabulary that tries to grasp the new changeableness: genderfluid, cisgender, transgender, agender, genderqueer, non-binary, two-spirit, etc.

It is obvious that identity is not a stable category. This is the irony of identity – the promise of an identical self dissolves in time and space, and any attempt to isolate, fix and homogenise an identity is doomed to failure. In postmodernity, identities are rather constructed out of interactions with the social environment – through constant negotiations, distinction and recognition, exclusion and belonging. Mutations and transformations are the expression of the tensions between vital elements that characterize identities. The path from Descartes’ “Cogito, ergo sum” to Rimbaud’s “I is another” is one of the best-known examples of such a transformative process.

Humanists and social scientists are experts in providing thick descriptions and large contexts in which identities can be located. They are used to relating to each other the different resources which feed into identities, and they are capable of building the contexts in which the relevant features and the relationships between them are embedded. In view of this potential, it is astonishing that citizens with such an academic background do not speak with confidence of the power of their methods and join Big Data debates about such elusive concepts as “identities”.

ERCIM News No. 111 has just been published at
